It's a pleasure to announce that we have just released the GA versions of a variety of Spring Data modules. With this release we continue to demonstrate SpringSource's commitment to providing Java developers with tools to work with state-of-the-art persistence technologies. In this blog post I'd like to give you detailed insight into what the release includes, explain why we decided on a release train, and give a brief outlook on the next steps on the Spring Data roadmap.

We worked hard to minimize these issues but eventually concluded that it makes sense to coordinate the releases much more closely and sync up minor releases of the modules. The general Spring Data release now ships with the following participants:

We introduce @Enable(Jpa|Mongo|Neo4j|Gemfire)Repositories annotations that are 1:1 equivalents of the XML repositories namespace element. So what had to be configured like this in former versions (using JPA as an example):

    <jpa:repositories base-package="com.acme.repositories" />

can now be achieved in Spring JavaConfig configuration classes by using the @EnableJpaRepositories annotation:

    @Configuration
    @EnableJpaRepositories(basePackages = "com.acme.repositories")
    class ApplicationConfig {

    }

With this style of configuration we enable completely XML-free application configuration across the store implementations. More advanced examples of annotation configuration usage can be found in the sample code for the recently released Spring Data book written by the Spring Data team members. The code is on GitHub and uses all the new features of the latest Spring Data release as well as the most recent Spring 3.2 milestones. Consider this repository as a canonical sample repo for Spring Data related code samples going forward.

The integration of @Enable… annotations for repositories required us to raise the Spring dependency to the 3.1 line, so the modules shipped with the release all depend on Spring 3.1.2 by default. The rest of the codebase is still fully compatible with the Spring 3.0 branch. If you really need to work with 3.0.7, manually declare the Spring dependency in your project's pom.xml to force the older version to be used.

GridFsTemplate (see this section of the reference docs for details).

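    import java.io.File;
    import java.io.FileInputStream;
    import org.springframework.context.ApplicationContext;
    import org.springframework.context.annotation.AnnotationConfigApplicationContext;
    import org.springframework.context.annotation.Bean;
    import org.springframework.context.annotation.Configuration;
    import org.springframework.data.mongodb.config.AbstractMongoConfiguration;
    import org.springframework.data.mongodb.gridfs.GridFsOperations;
    import org.springframework.data.mongodb.gridfs.GridFsResource;
    import org.springframework.data.mongodb.gridfs.GridFsTemplate;

    @Configuration
    class MongoConfig extends AbstractMongoConfiguration {

        // …

        @Bean
        public GridFsTemplate gridFsTemplate() throws Exception {
            return new GridFsTemplate(mongoDbFactory(), mappingMongoConverter());
        }
    }

    class GridFsClient {

        public static void main(String... args) throws Exception {
            ApplicationContext context = new AnnotationConfigApplicationContext(MongoConfig.class);
            GridFsOperations operations = context.getBean(GridFsOperations.class);

            // Open the file and store its content under the given file name
            File file = new File("myfile.txt");
            operations.store(new FileInputStream(file), "myfile.txt");

            // Look up all stored files whose name matches the pattern
            GridFsResource[] txtFiles = operations.getResources("*.txt");
        }
    }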

If you'd like to use Spring Data MongoDB from within a Java EE 6 environment, you can now do so: we ship a CDI extension that allows injecting a MongoDB-based Spring Data repository into a CDI-managed bean using @Inject (DATAMONGO-356).

Beyond that, we've received two community contributions by Maciej Walkowiak and Patryk Wasik. Maciej implemented support for JSR-303 validation by leveraging the persistence events we trigger (DATAMONGO-36). Patryk enabled optimistic locking by adding an @Version annotation as well as the necessary tweaks to our persistence mechanism to throw an exception in case document modifications have been made behind one's back (DATAMONGO-279).
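To illustrate the idea behind optimistic locking, here is a minimal, store-independent sketch in plain Java. It is not the Spring Data MongoDB implementation: the `VersionedStore` class, its `Document` type and the use of `IllegalStateException` are assumptions made purely for illustration. A save only succeeds if the version the caller read still matches the stored one.

```java
import java.util.HashMap;
import java.util.Map;

public class VersionedStore {

    /** Hypothetical document type: an id, a payload and a version counter. */
    public static final class Document {
        public final String id;
        public final String payload;
        public final long version;

        public Document(String id, String payload, long version) {
            this.id = id;
            this.payload = payload;
            this.version = version;
        }
    }

    private final Map<String, Document> documents = new HashMap<>();

    /** Returns the currently stored state, or null if the id is unknown. */
    public synchronized Document load(String id) {
        return documents.get(id);
    }

    /**
     * Stores the candidate only if its version matches the stored version
     * (0 for a new document) and returns the stored copy with the version
     * incremented by one.
     */
    public synchronized Document save(Document candidate) {
        Document current = documents.get(candidate.id);
        long expected = current == null ? 0 : current.version;
        if (candidate.version != expected) {
            // Someone else saved in the meantime: reject the stale write.
            throw new IllegalStateException("Stale version " + candidate.version
                    + " for document " + candidate.id + ", expected " + expected);
        }
        Document stored = new Document(candidate.id, candidate.payload, expected + 1);
        documents.put(stored.id, stored);
        return stored;
    }
}
```

If two callers load the same document and both try to save, the second save fails because the first one has already bumped the version. The caller can then reload and retry instead of silently overwriting the other change.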

@Indexed(unique=true) which will allow the Neo4j mechanisms for creating unique nodes and entities to kick in.

We also invested a lot in making working with relationships easier and more comprehensive: you can now populate and save relationship entities in the same way as node entities. We also allow more fine-grained control of the relationship type. It can now also be set on the @RelationshipEntity(type="REL_TYPE") annotation or in a field annotated with @RelationshipType to be used on a per-instance basis. Due to popular demand, we now allow @RelatedToVia annotated fields to be constrained by target field type.

With this release the support for storing type-hierarchies in the graph was revamped so you can use @TypeAlias("myType") for saving precious space in properties and indexes. The polymorphic reads for aliased types now also work as expected.

The new lifecycle events are a useful addition for audits, validation and other cross-cutting concerns. As in the other modules, ApplicationListener implementations can now listen for (Before|After)SaveEvents. We also refactored the internal infrastructure so that you can now directly create a Neo4jTemplate around a GraphDatabaseService, even without an application context set up.

Something we've put a lot of effort into was improving the handling of user-defined or finder-method-derived Cypher queries. This is an area that will also see a lot of investment in the future of the Spring Data Neo4j module. Spring Data Neo4j's 2.1 release supports the recently released Neo4j 1.8 version and works with 1.7 as well.

The Spring Data Gemfire module will also be shipped alongside the upcoming Gemfire 7.0 release to continue the mission to simplify developing Java applications using data grids.

All it takes is registering the RepositoryRestExporterServlet in your application or letting your Spring MVC DispatcherServlet configuration include the RepositoryRestMvcConfiguration JavaConfig class. By default, this exposes a resource for every Spring Data repository in your application context. The resources can be discovered by following the links exposed in a core resource.

Assume you have two repository interfaces, CustomerRepository and ProductRepository. You can now go ahead and add the following setup code to your Servlet 3 WebApplicationInitializer:

    // container is the ServletContext handed to WebApplicationInitializer.onStartup(…)
    DispatcherServlet servlet = new RepositoryRestExporterServlet();
    ServletRegistration.Dynamic dispatcher = container.addServlet("dispatcher", servlet);
    dispatcher.setLoadOnStartup(1);
    dispatcher.addMapping("/");

Running the app inside e.g. Jetty will give you this:

    $ curl -v http://localhost:8080

    { "links" : [ {
        "rel" : "product",
        "href" : "http://localhost:8080/product"
      }, {
        "rel" : "customer",
        "href" : "http://localhost:8080/customer"
      } ] }

Clients can now follow these links to access products and customers, explore their relationships to other entities (hypermedia-driven in turn) and execute queries declared through query methods in their repository interfaces. Currently, only JPA-based repositories are supported, but we're going to add support for all the other stores in upcoming versions.
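As a rough illustration of link discovery on the client side, the following plain-Java sketch extracts the rel/href pairs from a root representation like the one shown above. It rests on several assumptions: the `LinkDiscovery` class is hypothetical, and the regular expression only handles the flat structure of that sample response. A real client would use a proper JSON parser together with an HTTP client.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class LinkDiscovery {

    // Matches one link object of the form { "rel" : "...", "href" : "..." }.
    private static final Pattern LINK = Pattern.compile(
            "\"rel\"\\s*:\\s*\"([^\"]+)\"\\s*,\\s*\"href\"\\s*:\\s*\"([^\"]+)\"");

    /** Returns the links of a root representation, keyed by relation name. */
    public static Map<String, String> discover(String json) {
        Map<String, String> links = new LinkedHashMap<>();
        Matcher matcher = LINK.matcher(json);
        while (matcher.find()) {
            links.put(matcher.group(1), matcher.group(2));
        }
        return links;
    }
}
```

A client written this way only needs to know the root URI and the relation names ("product", "customer"); the concrete resource URIs are taken from the response rather than hard-coded.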

There are detailed options to configure which resources and which HTTP methods get exposed, as well as extension points to manipulate the returned representations with custom links and enrich your API beyond plain CRUD operations. To find out more about the project, check out its reference documentation or the relevant chapter of the Spring Data book we wrote with O'Reilly (read more on that below).

Besides the store implementation modules led by SpringSource employees, we've recently started to see community-led implementations popping up. Neale Upstone has just released version 1.0 of the FuzzyDB module, and the Spring Data Solr module led by Christoph Strobl is close to a first milestone.

If there's any feature you'd like to see in the Spring Data modules, or any feedback on the existing ones, now is the time to raise your voice in the Spring JIRA.

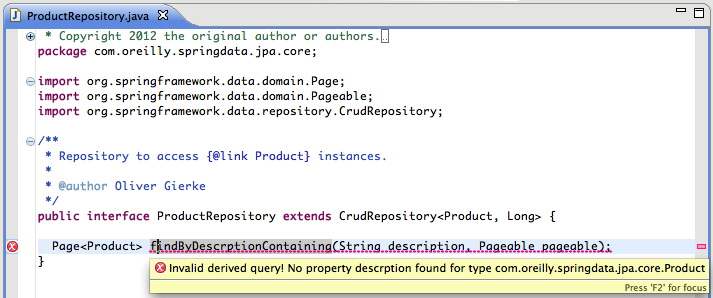

Query method validation

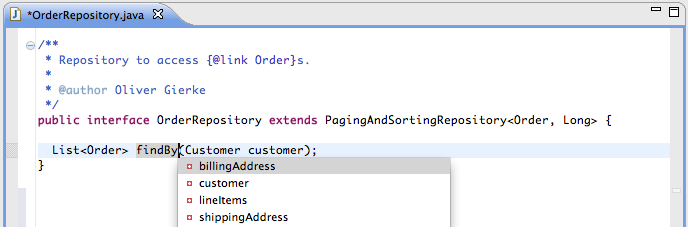

Code completion support for query methods